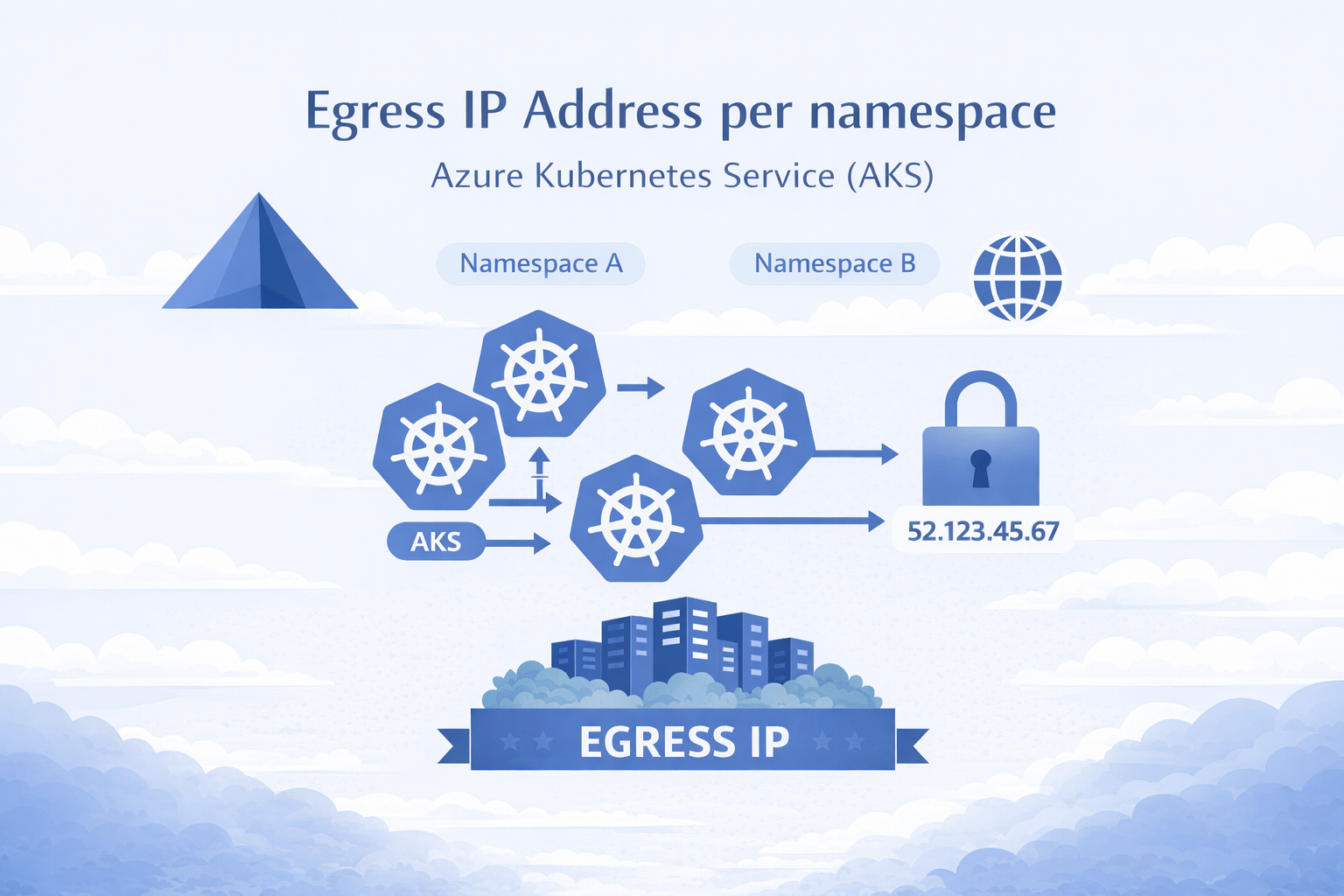

AKS Static Egress Gateway: Per-Namespace Static Egress IPs

🎯 TL;DR: Unique Static Egress IP per Kubernetes Namespace in AKS

AKS now has a native equivalent to OpenShift’s EgressIP: Static Egress Gateway. One dedicated gateway node pool + a StaticGatewayConfiguration CRD per namespace = stable egress IPs (public or private). No more separate node pools, subnets, and NAT Gateways per namespace.

Pods opt in via annotation, IPs are stable across restarts/upgrades, public and private egress are both supported, and the gateway layers cleanly with Azure Firewall. Requires the aks-preview CLI extension and the StaticEgressGatewayPreview feature flag. Private IP mode requires Kubernetes 1.34+.

Full working demo: github.com/Ricky-G/azure-scenario-hub/tree/main/src/aks-unique-egress-ip-per-namespace

If you’ve used OpenShift’s EgressIP CRD to assign static egress IPs per namespace for firewall allowlisting, you know how critical this is for security compliance. The first question in any OpenShift-to-AKS migration is always: “How do we get per-namespace static egress IPs?”

Until recently, you needed a separate node pool, subnet, and NAT Gateway per namespace. Ten namespaces = ten of each. It didn’t scale.

AKS Static Egress Gateway fixes this: one gateway node pool, one subnet, one CRD per namespace.
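To make the scaling difference concrete, here is a small back-of-the-envelope sketch that counts the egress-related resources under each approach. The function names and dictionary keys are made up for illustration, and it only counts the pieces named above (it ignores your regular workload node pools).

```python
def legacy_resources(namespaces: int) -> dict:
    """Old approach: one node pool, subnet, and NAT Gateway per namespace."""
    return {
        "node_pools": namespaces,
        "subnets": namespaces,
        "nat_gateways": namespaces,
    }


def gateway_resources(namespaces: int) -> dict:
    """Static Egress Gateway: one shared gateway pool and subnet, one CRD per namespace."""
    return {
        "node_pools": 1,
        "subnets": 1,
        "static_gateway_configurations": namespaces,
    }


if __name__ == "__main__":
    for n in (1, 10, 50):
        old = legacy_resources(n)
        new = gateway_resources(n)
        print(f"{n} namespaces: old={old} new={new}")
```

The Azure infrastructure stays constant as namespaces grow; the only thing that scales with namespace count is a lightweight Kubernetes object.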

    graph LR
        A["Namespace A"] --> GW["Gateway Pool"]
        B["Namespace B"] --> GW
        C["Namespace C"] --> GW
        GW -->|"Unique IP per NS"| EXT["External Services"]
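As a rough sketch of what "one CRD per namespace" could look like in practice, the script below generates one StaticGatewayConfiguration manifest per namespace, plus the annotation a pod would carry to opt in. Treat the details as assumptions to check against the current AKS documentation: the API version, the `gatewayNodepoolName` field, and the annotation key reflect my reading of the preview docs and may change. The namespace names, the `gwpool` node pool name, and the `<namespace>-egress` naming scheme are purely hypothetical.

```python
import json

# Assumptions: API group/version and annotation key as I understand them from
# the AKS Static Egress Gateway preview docs. Verify before relying on them.
API_VERSION = "egressgateway.kubernetes.azure.com/v1alpha1"
POD_ANNOTATION = "kubernetes.azure.com/static-gateway-configuration"


def gateway_config(namespace: str, nodepool: str) -> dict:
    """Build one StaticGatewayConfiguration manifest for a namespace."""
    return {
        "apiVersion": API_VERSION,
        "kind": "StaticGatewayConfiguration",
        # Hypothetical naming scheme: "<namespace>-egress".
        "metadata": {"name": f"{namespace}-egress", "namespace": namespace},
        # Every namespace points at the same shared gateway node pool.
        "spec": {"gatewayNodepoolName": nodepool},
    }


def pod_opt_in(config_name: str) -> dict:
    """Annotation a pod template carries to send egress through the gateway."""
    return {POD_ANNOTATION: config_name}


if __name__ == "__main__":
    namespaces = ["team-a", "team-b", "team-c"]  # hypothetical namespaces
    manifests = [gateway_config(ns, "gwpool") for ns in namespaces]
    # JSON is accepted wherever Kubernetes accepts YAML, so a v1 List works here.
    print(json.dumps({"apiVersion": "v1", "kind": "List", "items": manifests}, indent=2))
    print(json.dumps(pod_opt_in("team-a-egress"), indent=2))
```

Generating the manifests from a namespace list keeps the per-namespace objects uniform, which matters when the whole point is a predictable egress IP for firewall allowlisting.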